Wake Words for Activating Software

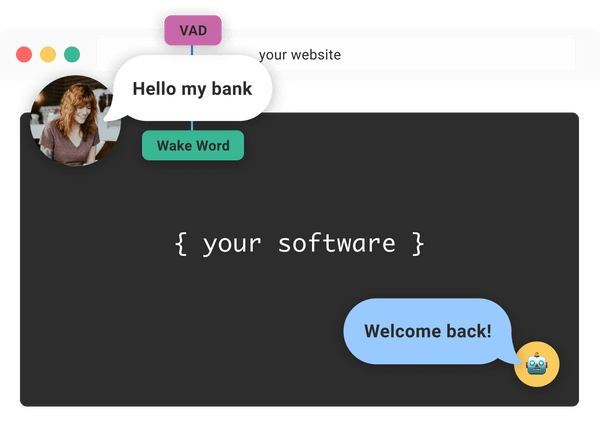

Multilingual, on-device wake words recognize one or more commands that activate listening in your software.


What Are Wake Words?

Imagine you're at a party, and everyone's talking at once. There are so many conversations—how do you know if someone is talking to you? From the middle of the din, you hear someone call out your name. You immediately turn to see who it was and to listen for what they'll say next.

This is the real-life analog of a software wake word: a word (or set of words) that switches voice technology from passive listening to actively recognizing speech so that it can act on what it hears.

A wake word detector uses a machine learning model to process the audio it receives and listen for what can be thought of as its name. You might respond to your first name, family name, full name, nickname, or an honorific. So can your software!

Why Should I Use a Wake Word?

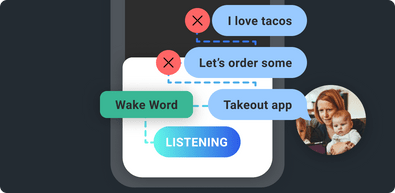

By using wake words, your software is both more privacy-minded (not transcribing speech that isn't directed at it) and more efficient (only acting when it's directly addressed).

Hands-Free

Accessible, safe, natural.

Edge-Based

Detection happens entirely on the device without accessing a network or cloud services.

Energy-Conscious

Activating your software only when it's directly addressed keeps audio processing efficient and uses less power.

Cross-Platform

Train a model with our no-code AutoSpeech Maker and use it across all of our platforms.

Privacy-Minded

Rather than listening to everything it hears, the detector only answers one question: “Did I hear one of the names you trained me to listen for?”

How Do Wake Words Work?

Wake words rely on a wake word detector. In Spokestack, the detector uses machine learning models that continuously analyze microphone input for specific sounds, such as the wake words you can train and build without code in Spokestack Maker or Spokestack Pro. These models work in tandem with a voice activity detector (VAD) to:

- Detect human speech

- Detect whether a preset name, short phrase, or word was said

- Send a trigger event to Spokestack's Speech Pipeline so it can respond
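To make those three steps concrete, here is a minimal Swift sketch of a voice activity detector gating a wake word classifier. It assumes a simple energy-threshold VAD and a stand-in `classify` closure in place of a trained model; `EnergyVAD`, `WakeWordGate`, and the thresholds are hypothetical names for illustration, not Spokestack's API.

```swift
// Hypothetical sketch: a VAD gating a wake word classifier.
// Names and thresholds are illustrative assumptions, not Spokestack's API.

/// Root-mean-square energy of one audio frame.
func rms(_ frame: [Float]) -> Float {
    guard !frame.isEmpty else { return 0 }
    let sumOfSquares = frame.reduce(0) { $0 + $1 * $1 }
    return (sumOfSquares / Float(frame.count)).squareRoot()
}

/// A deliberately simple energy-threshold voice activity detector.
struct EnergyVAD {
    var threshold: Float
    func isSpeech(_ frame: [Float]) -> Bool { rms(frame) >= threshold }
}

final class WakeWordGate {
    let vad: EnergyVAD
    let wakeThreshold: Float
    /// Stand-in for a trained model: returns a wake word probability for a frame.
    let classify: ([Float]) -> Float
    /// Called once per detection; a real app might start speech recognition here.
    var onTrigger: () -> Void = {}

    init(vad: EnergyVAD, wakeThreshold: Float, classify: @escaping ([Float]) -> Float) {
        self.vad = vad
        self.wakeThreshold = wakeThreshold
        self.classify = classify
    }

    /// Processes one frame and reports whether it fired the trigger.
    @discardableResult
    func process(_ frame: [Float]) -> Bool {
        // 1. Detect human speech: skip the classifier entirely on silence.
        guard vad.isSpeech(frame) else { return false }
        // 2. Detect whether the wake word was said.
        guard classify(frame) >= wakeThreshold else { return false }
        // 3. Send a trigger event.
        onTrigger()
        return true
    }
}
```

Gating on the VAD first means the classifier never runs on silence, which is one reason this kind of design saves power.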

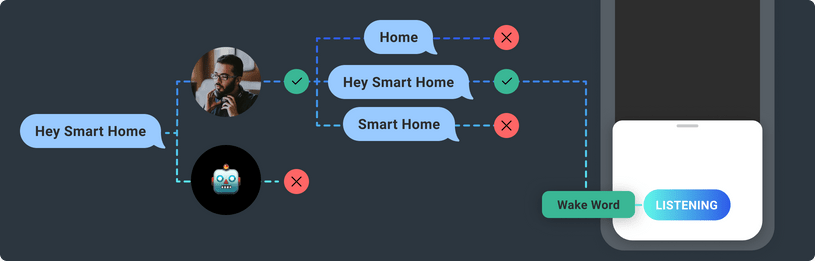

A wake word detector is a type of binary classifier: it decides whether or not a stretch of audio contains a wake word. Some detectors are built to recognize a single word, and some can recognize several. During training, the detector hears many examples of the desired wake word(s), many examples of other words, and background noise, and it learns to tell them apart. Spokestack's wake word detectors use a series of three neural models: the first filters user speech to isolate the most important frequency components, the second encodes those components for classification, and the third detects whether a wake word is present in the encoded version. More detailed information about the models themselves can be found in our documentation. Splitting the task into three stages lets us process audio as efficiently as possible, keeping detection fast and power use low.
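The Swift sketch below shows only the shape of a three-stage filter, encode, detect design, under stated assumptions. The stages are toy stand-ins rather than Spokestack's neural models: the filter computes naive DFT magnitudes, the encoder log-compresses them, and the detector averages one frequency bin over a sliding window of encoded frames. All names (`filterStage`, `encodeStage`, `WindowDetector`, `WakeWordPipeline`) are hypothetical.

```swift
import Foundation

// Hypothetical sketch of a filter -> encode -> detect pipeline.
// Each stage is a toy stand-in for a trained model, not Spokestack's implementation.

/// Stage 1 (filter): magnitudes of the first `bins` DFT coefficients of one frame.
func filterStage(_ frame: [Float], bins: Int) -> [Float] {
    let n = Float(frame.count)
    return (0..<bins).map { k -> Float in
        var re: Float = 0
        var im: Float = 0
        for (i, x) in frame.enumerated() {
            let angle = 2 * Float.pi * Float(k * i) / n
            re += x * cos(angle)
            im -= x * sin(angle)
        }
        return (re * re + im * im).squareRoot()
    }
}

/// Stage 2 (encode): log-compress the filtered features.
func encodeStage(_ features: [Float]) -> [Float] {
    features.map { log(1 + $0) }
}

/// Stage 3 (detect): averages one feature over a sliding window of encoded frames.
struct WindowDetector {
    let windowSize: Int
    let targetBin: Int
    let threshold: Float
    private var window: [[Float]] = []

    init(windowSize: Int, targetBin: Int, threshold: Float) {
        self.windowSize = windowSize
        self.targetBin = targetBin
        self.threshold = threshold
    }

    /// Adds one encoded frame; returns true once a full window crosses the threshold.
    mutating func push(_ encoded: [Float]) -> Bool {
        window.append(encoded)
        if window.count > windowSize { window.removeFirst() }
        guard window.count == windowSize else { return false }
        let total = window.reduce(Float(0)) { $0 + $1[targetBin] }
        return total / Float(windowSize) >= threshold
    }
}

/// Chains the three stages for each incoming audio frame.
struct WakeWordPipeline {
    let bins: Int
    var detector: WindowDetector

    mutating func process(_ frame: [Float]) -> Bool {
        let filtered = filterStage(frame, bins: bins)  // stage 1
        let encoded = encodeStage(filtered)            // stage 2
        return detector.push(encoded)                  // stage 3
    }
}
```

Each frame is filtered and encoded exactly once, and the detector only ever looks at compact encoded frames, which is the kind of saving a staged design is meant to provide.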

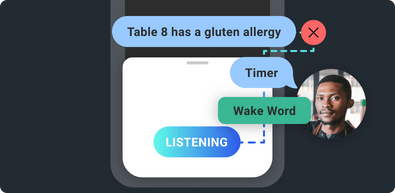

Wake Word Triggers

So far, we've discussed how wake words trigger the rest of the speech pipeline (speech recognition, keyword recognition, and even natural language understanding). But a wake word's response isn't limited to other voice technology components. A wake word can trigger any action in your software: it can activate a feature or initiate a workflow, for example. That makes wake word triggers a powerful user interface.
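Because a wake word detection is just an event, you can route it to any code you like. Here is a minimal sketch, assuming a hypothetical `WakeTrigger` type (not part of Spokestack) that you would call from whatever callback your wake word detector provides.

```swift
// Hypothetical sketch: fanning a single wake word detection out to app actions.
// `WakeTrigger` is an illustrative name, not part of Spokestack.

final class WakeTrigger {
    private var handlers: [() -> Void] = []

    /// Registers an action to run every time the wake word is detected.
    func subscribe(_ handler: @escaping () -> Void) {
        handlers.append(handler)
    }

    /// Call this from your wake word callback; runs handlers in registration order.
    func fire() {
        for handler in handlers { handler() }
    }
}
```

For example, a fitness app might subscribe one handler that starts speech recognition and another that starts a workout timer, so saying the wake word does both.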

Wake Word Detection

Software using Spokestack's wake word needs the device's microphone to be active so that it can respond when it hears the wake word. It's important to understand, however, that until the wake word is recognized, Spokestack isn't “listening” to the audio in any real sense. It's merely answering the question “Did I just hear my name?” over and over again.
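One way to picture this is a detector that holds only a short, fixed-size window of recent frames and discards everything older. In the hypothetical sketch below, `RecentFrames` and `listenForName` are illustrative names, and the `heardName` closure stands in for the trained model; the only output is the index at which the name was heard, or `nil`.

```swift
// Hypothetical sketch: answering "Did I just hear my name?" over a short rolling window.
// Only the most recent `capacity` frames are ever held; older audio is discarded.

struct RecentFrames {
    let capacity: Int
    private(set) var frames: [[Float]] = []

    init(capacity: Int) { self.capacity = capacity }

    mutating func append(_ frame: [Float]) {
        frames.append(frame)
        if frames.count > capacity { frames.removeFirst() }  // forget the oldest frame
    }
}

/// Returns the index of the frame at which the name was heard, or nil if it never was.
/// `heardName` stands in for the trained wake word model.
func listenForName(_ incoming: [[Float]], windowFrames: Int,
                   heardName: ([[Float]]) -> Bool) -> Int? {
    var window = RecentFrames(capacity: windowFrames)
    for (index, frame) in incoming.enumerated() {
        window.append(frame)
        // Wake word heard: this is where the rest of the pipeline would take over.
        if heardName(window.frames) { return index }
    }
    return nil
}
```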

With Spokestack Maker or Spokestack Pro, you can train wake word models to recognize a number of different phrases, or utterances, so your app can activate from different invocations without transcribing which one the user spoke. This contrasts with a keyword recognizer, which gives you a transcript of the user's speech.
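The difference in what each component reports can be sketched with two hypothetical Swift types. Strings stand in for audio here, and `WakeWordEvent`, `KeywordEvent`, `detectWakeWord`, and `recognizeKeyword` are illustrative assumptions, not Spokestack's event types.

```swift
// Hypothetical sketch contrasting what each component reports.
// Strings stand in for audio; these are not Spokestack's event types.

/// A wake word model answers one yes/no question, even when trained on several utterances.
enum WakeWordEvent: Equatable {
    case activated
}

/// A keyword recognizer reports what it heard.
struct KeywordEvent: Equatable {
    let transcript: String
}

/// Toy wake word detector: any trained utterance yields the same, anonymous event.
func detectWakeWord(_ heard: String, trainedUtterances: Set<String>) -> WakeWordEvent? {
    trainedUtterances.contains(heard) ? .activated : nil
}

/// Toy keyword recognizer: returns which keyword was spoken.
func recognizeKeyword(_ heard: String, keywords: Set<String>) -> KeywordEvent? {
    keywords.contains(heard) ? KeywordEvent(transcript: heard) : nil
}
```

Both trained utterances produce the identical `.activated` event, so the wake word path carries no record of which phrase the user said.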

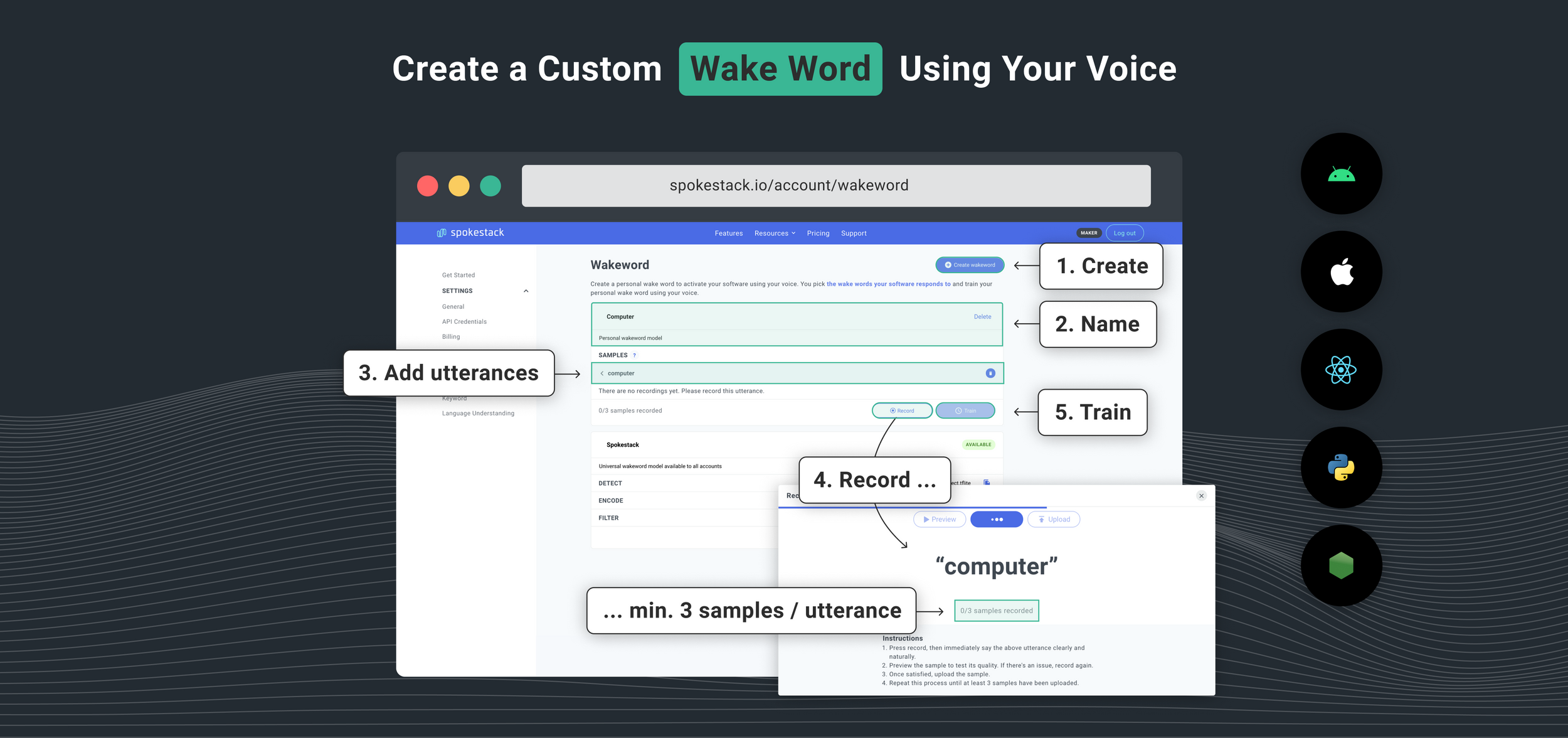

Creating a personal wake word model is straightforward using Spokestack Maker or Spokestack Pro, a microphone, and a quiet room.

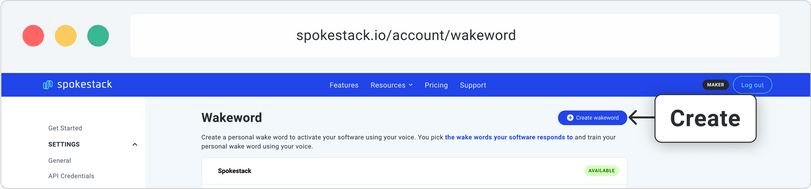

1 Create a Wake Word

First, head to the wake word builder and click Create wake word in the top right. A section for the new model will appear; give the model a name.

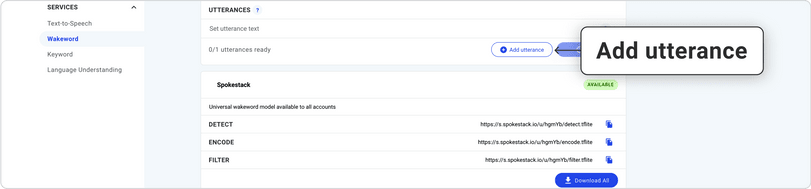

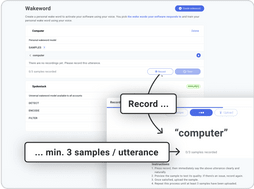

2 Add and Record Utterances

Then, look for the Utterances section. This is where you'll add the words or short phrases you want to trigger your app. Click Add utterance to compose your list; for each utterance you add, follow this process:

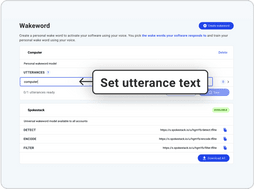

1. Set the utterance text.

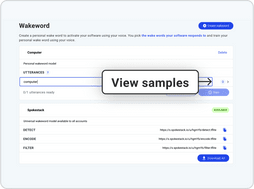

2. Click the arrow to the right of the utterance to view its samples.

3. Click Record at the bottom of the box to add new samples.

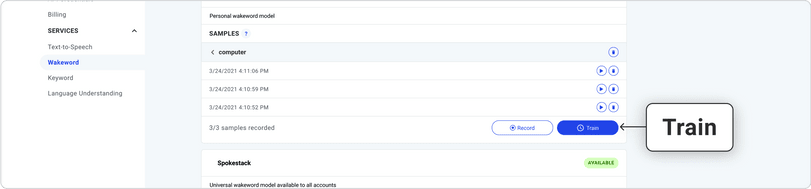

3 Train Your Model

When you've added as many different utterances as you want and recorded all your samples, click Train. In a few minutes, you'll be able to download and use your very own wake word model. You can retrain as many times as you like, adding or deleting both utterances and samples as necessary.

How Do I Use a Wake Word Model?

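For mobile apps, integrate Spokestack Tray, a drop-in UI widget that manages voice interactions and delivers actionable user commands with just a few lines of code. You can also configure the speech pipeline directly; on iOS, point it at your wake word's three model files (detect, encode, and filter):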

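```swift
import Spokestack

// Build a speech pipeline that uses your wake word models.
// `self` is registered as a listener to receive pipeline events.
let pipeline = SpeechPipelineBuilder()
    .addListener(self)
    // TensorFlow Lite wake word detection followed by Apple speech recognition
    .useProfile(.tfliteWakewordAppleSpeech)
    // Enable performance-level tracing
    .setProperty("tracing", Trace.Level.PERF)
    // The three wake word models; in an app these may need to be full paths,
    // e.g. from Bundle.main.path(forResource:ofType:)
    .setProperty("detectModelPath", "detect.tflite")
    .setProperty("encodeModelPath", "encode.tflite")
    .setProperty("filterModelPath", "filter.tflite")
    .build()

// Start listening for the wake word
pipeline.start()
```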


Full-Featured Platform SDK

Our native iOS library is written in Swift and makes setup a breeze.
